Like the fine folks at the New York Times Privacy Project, I often find myself wondering whether it’s more useful to (a) explain why cargo-cult privacy efforts, or educational initiatives that empower individuals as individuals, as destined to fail, or, (b) actually provide individuals with information that is useful for them. In the end, it’s a dialectic. Nothing will get better until people make mindful and different choices about their daily interactions inside the digital domain, choices that reinforced by choices made in the political realm to empower institutions or regulators to try and shape the battlefield in a way that reduces our exposure to the more damaging aspect of the surveillance-capital-attention economy.

One source of tension is that it is often NOT useful to provide regular users with too much information, lest they be overwhelmed with information, even as I try and remind students, though, that even small changes can echo loudly. (I encourage them to share their knowledge, which, I suppose, is my bow towards collective action).

Ars Technica’s coverage of the SHA1 cryptographic hash algorithm is a case in point. Yet again, SHA1 was successfully attacked. (How? Why — I am not going to begin to try and explain because I don’t understand myself, but nerds, have at it.). SHA1 was widely used by certain privacy tools to verify the authenticity of the hash associated with user identities. SHA1, it turns out, is vulnerable, and folks began to phase out its use. But even armed with the knowledge that the protocol has flaws is insufficient to do much of anything, because:

Git, the world's most widely used system for managing software development among multiple people, still relies on SHA1 to ensure data integrity. And many non-Web applications that rely on HTTPS encryption still accept SHA1 certificates. SHA1 is also still allowed for in-protocol signatures in the Transport Layer Security and Secure Shell protocols.

In a paper presented at this week’s Real World Crypto Symposium in New York City, the researchers warned that even if SHA1 usage is low or used only for backward compatibility, it will leave users open to the threat of attacks that downgrade encrypted connections to the broken hash function. The researchers said their results underscore the importance of fully phasing out SHA1 across the board as soon as possible.

So, if I’m teaching non-technical folks how to set up PGP or to collaborate over Git, should I even mention SHA1? I’d say: no.

I’ll stick with practical advice. Geoffrey Fowler recently discovered Jumbo, an app that helps users clean up their Google and Facebook and Twitter privacy settings without having to labor through those sites’ fairly onerous and sometimes confusing account security pages. This does not even beg the question: if an app can change a lot of stuff at once, why won’t Google, Facebook and Twitter, not to mention Amazon, make it not just easier but intuitive and simple to change a category, of, say, invasive tracking protocols, all at once? You know the answer.

What I like about Jumbo is that is explains the trade-offs involved in turning off and on these settings.

Incidentally, I thought I had scrubbed my GFTA (Google, Facebook, Twitter, Amazon) settings thoroughly. I installed Jumbo and discovered I had not. And even with Jumbo, Instagram continues to target me invasively and my various personal musings find their way into ads that thread across my browsing. Jumbo is a good start, though. It’s going to become part of my initial privacy toolkit.

Over the holidays, I read the Electronic Frontier Foundation’s investigation into corporate online surveillance, and I learned a few things. There are at least 9 digital identifiers that first-person and third-person trackers can use to personally identify “you” as the subject of their targeting.

Five of them are enmeshed in the way that web browsing and interfacing works, and four of them are built in to the process that superintends the function of our phones. (First-person tracking occurs when you are doing something directly with a site or platform; “third-person” tracking collects information that your devices and web interactions must emit in order to function on the sites you need to connect with; that is, they leach off of your detritus. You have not chosen to interact with third-person trackers.)

9 ways! Can you, or should you, try to turn off all of them? No. But maybe — maybe — a good approach to teaching digital security would be show students what those 9 identifiers are used for, how they are collected, who collects them, and them give them the tools to turn them off, as warranted, when situations call for it.

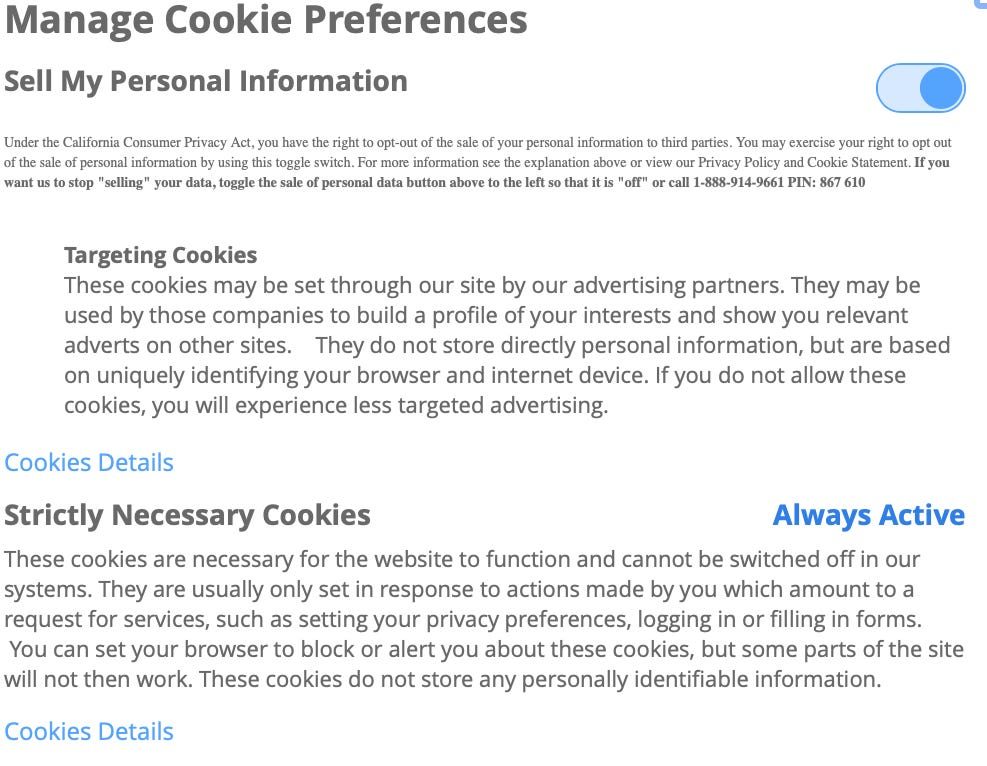

As of Jan 1, California’s Consumer Privacy Act imposes new requirements on sites that collect information on you to use for advertisements. Some of these sites want to make sure that folks know what will happen if they toggle off ad-tracking. Here’s one bit of “context”: