The Rosacea Diaries: Misinformation In Microcosm

An Approach to Countering Misinformation On Chat Apps

Like 3 million Americans, I face the star light with a cosmetic skin condition called rosacea, where blood vessels close to the skin swell and rupture. For some people, it can be painful and even debilitating. Luckily, for me, it is only unsightly. Still, looking ahead to the day when I can see friends again, I wanted to get treatment.

So, in an effort to be frugal, I did what any 42-year-old man looking for a way to reduce the redness would do: I went on TikTok.

Lots of people have searched for health information on TikTok. Videos with a #Rosacea hashtag have been clicked on 82.3 million times.

I started to scroll down. A number of the most popular videos came from people who purported to be MDs. That’s good.

I scrolled a little bit. One of the most popular videos, produced by a teenager here in California, offered me this bit of advice:

“Apply activated charcoal solution to the area.”

Now, being cautious, and curious, I decided to consult with a dermatologist.

Let’s call him… James, because his name is James.

When I spoke to James about my rosacea, he told me in authoritative tones NOT to put charcoal on my face. Then he told me a story:

He, too, had been on TikTok, eager to see if, as a relatively young man, he could correct some of the misinformation about skin health care that was proliferating.

James told me that in the comments section of one of the TikTok videos he saw, he was directed to a link that took him to a Telegram group, which had about 500 users, he said, mostly teenagers, from all across the world.

Telegram is one of the most popular and fastest-growing chat apps in the world. Individual conversations can be encrypted, but group chats, at least as of this writing, are secure at the transport level only, and they’re stored in its cloud, unlike WhatsApp and Signal, which process encrypted messages stored on your device (or in your own cloud space).

James thought to himself: Hey, I have a captive audience. And I’m a doctor. I can maybe spend some time and use my influence to steer people to the right treatments, stuff that isn’t going to be dangerous.

He told me he tried providing information at first, and was ignored.

Group dynamics are important, he noticed. The loud voices who posted a lot tended to get the most engagement.

James tried telling the group that he was a doctor. And he even showed them his professional website.

He says he was then told by one of the powerful members of the group that doctors were too expensive, and that these amateur skin care tips worked, besides.

In mounting frustration, he tried an appeal to the ethics of the platform. This is a chat group. This is not where you get information from. Well, it wasn’t where people like James got their information from… but like it or not, it was where a lot of these teenagers did.

Finally, James said flatly: Look, you guys are spreading misinformation. It’s harmful, and you should stop.

He was then booted from the group.

I donned my counter-misinformation practitioner cap and started thinking.

I asked James whether any of his posts attracted any attention at all. A few, he said. Some people thanked me for them… Well, I said, let’s think a little about group dynamics. If the goal here is to persuade people to change their behavior so they don’t hurt themselves, maybe you could have tried a different approach: what if you had taken the group of people who liked your posts, thanked them, built a rapport with them, and communicated privately your concerns about other posts, so you could build a team of folks who want to cleanse the group of harmful misinformation?

Or… could James have approached the people who were posting the most misinformation and intervened privately on a side channel?

Marc, he told me. I don’t have time to do that.

Which is absolutely true.

He can’t do it.

So: as a researcher or as a counter-misinformation practitioner, or, more generally, as someone who wants to counter the spread of bad information, what could one realistically do?

Well, we know, now, as most of you do, that there are effective ways and ineffective ways of fact-checking… or claim reviewing.

One of the most effective ways, in part because it relies on the same algorithms that tend to get us in trouble, is the SIFT method.

TRACE CLAIMS TO THEIR ORIGINAL CONTEXT

Instead of a top-down “look for experts only” model, or a link to an official report… this practice exercises the mind, leading consumers of information from the starting point of a false claim upward, out of the rabbit hole, and allowing them to use their own skills of discernment to reach better conclusions. As you know, media literacy as a method can seem quite offensive to people who spread misinformation, because many of them are sophisticated consumers and processors of information.

Well, SIFT is different. The idea is that if you can very quickly direct someone to a different, more broadly accessible source of information, the person can, on her or his own, come to a more accurate conclusion with FEWER sources of information… rather than MORE sources.

I like it because it incorporates mindfulness into this intervention.

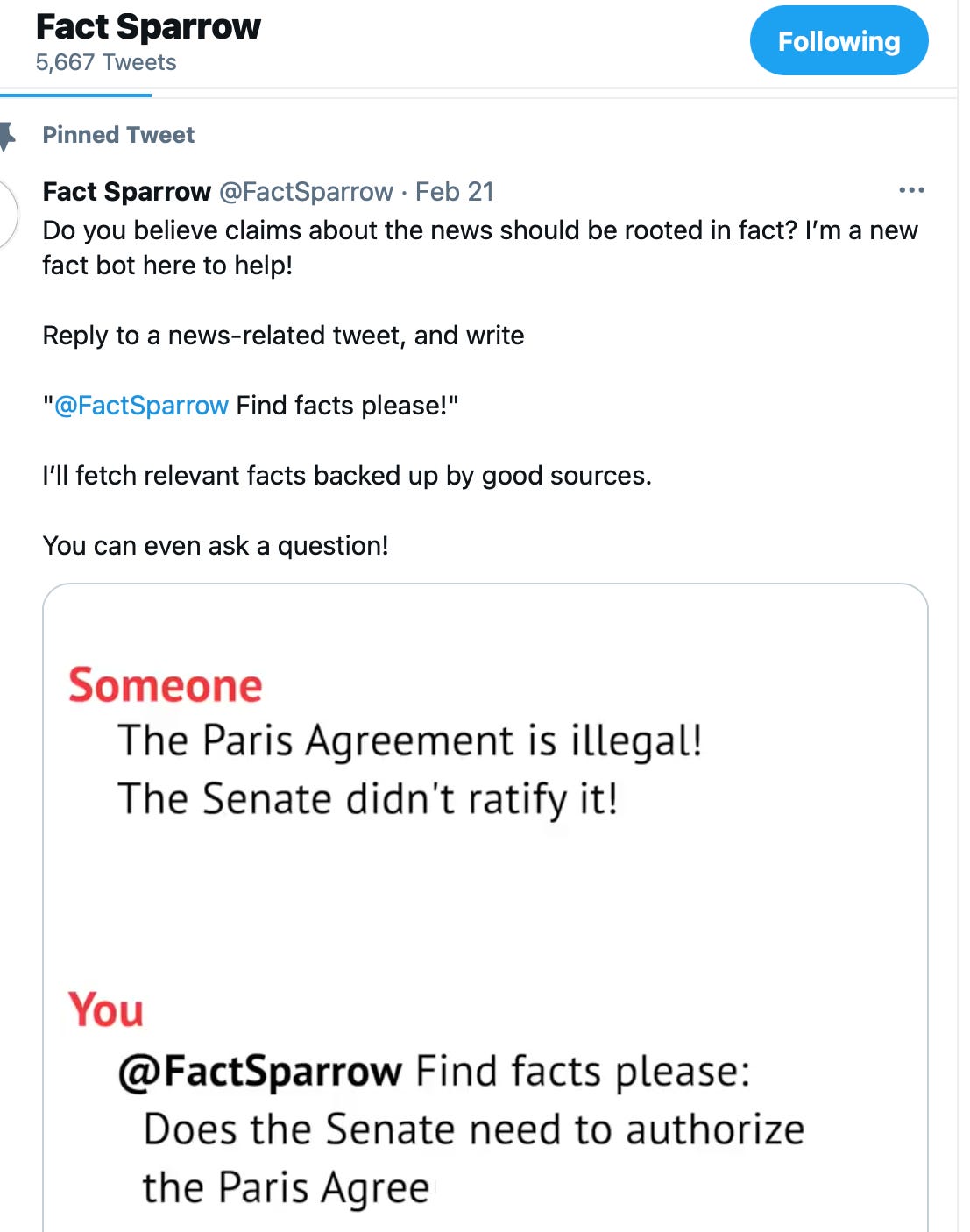

Before the election I did some work with a company called Repustar. It’s a benefit corporation that’s working to engineer fact checking into our social experiences. They built a neat widget called Fact Sparrow. You can ask Fact Sparrow to test a claim by @-mentioning it during a public conversation.

There are other examples of this functionality; Twitter is creating its own.

But this was the one most available to me.

On Twitter, all you have to do to pull in a pre-cooked fact check, one that contains a direct outside link, is to @-mention @factsparrow.

So I wondered: what would it take to add this functionality into Telegram?

Well, FactSparrow as an account would have to be added to the group by the moderator.

There would have to be some persuasion and rapport building by someone who understands the technology and is committed to spending that time working with the group.
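To make the idea concrete, here is a minimal sketch in Python of what such a bot might do once a moderator has let it into a group. Everything in it is an assumption for illustration: the `@factsparrow` handle is reused from the Twitter example, the table of reviewed claims and its example.org link are hypothetical placeholders, and the claim is assumed to follow the mention in the same message. It does not call Telegram’s or Repustar’s actual services; a real bot would receive messages from the platform and look claims up in a real claim-review database.

```python
import re
from typing import Optional

BOT_HANDLE = "@factsparrow"  # hypothetical handle for the bot inside the group

# Hypothetical table of pre-cooked claim reviews: keyword -> (summary, outside link).
# The link is a placeholder, not a real fact check.
REVIEWED_CLAIMS = {
    "charcoal": (
        "A dermatologist advises against putting charcoal on your face for rosacea.",
        "https://example.org/reviews/charcoal-rosacea",
    ),
}


def extract_claim(message: str) -> Optional[str]:
    """Return the text that follows a mention of the bot, or None if it was not mentioned."""
    pattern = re.escape(BOT_HANDLE) + r"\b\s*(.*)"
    match = re.search(pattern, message, flags=re.IGNORECASE | re.DOTALL)
    if match is None:
        return None
    return match.group(1).strip()


def build_reply(message: str) -> Optional[str]:
    """Build the bot's reply, or return None so the bot stays silent when not summoned."""
    claim = extract_claim(message)
    if claim is None:
        return None
    lowered = claim.lower()
    for keyword, (summary, link) in REVIEWED_CLAIMS.items():
        if keyword in lowered:
            # Offer one outside link so the reader can trace the claim on their own.
            return f"{summary} Trace it yourself: {link}"
    return "No review found for that claim yet."
```

The bot only speaks when a group member summons it, and it answers with a single outside link rather than a lecture, which is the SIFT-style nudge described above.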

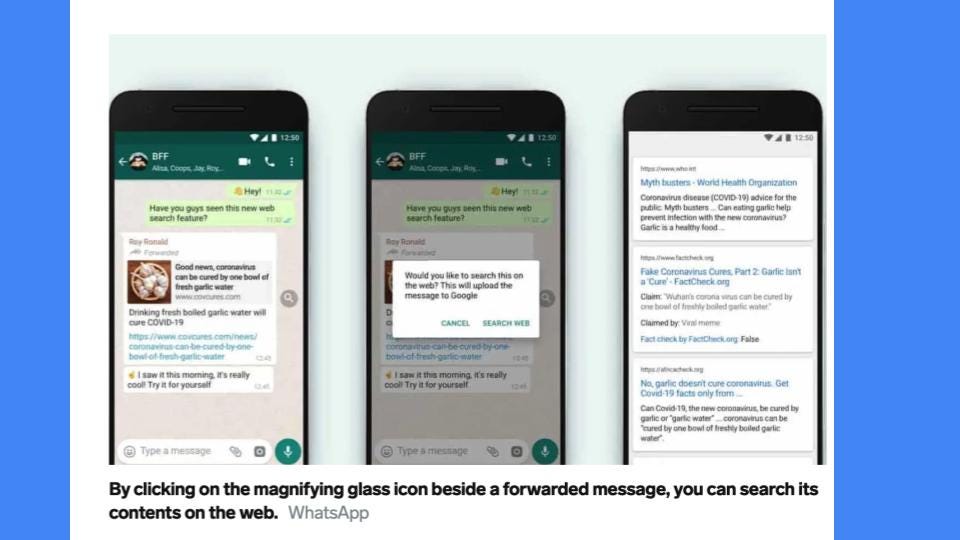

The other way, of course, is to convince these apps to build this function into their ecosystem, one of many trusted fact-checking or claim-reviewing or SIFT-provoking technology prompts.

It won’t compromise the core value of encryption. But it would show a commitment to allowing people inside of closed groups to access other sources of information.

It is a compromise that allows us to attempt to build a consensus about a truthful claim operating within an environment that distrusts truth claims.

But it is not something we can do without the corporations involved here. They have to make the decision whether to contribute to a rebuilding of trustworthiness, or pretend to stay above the fray by their silence, which, in many cases, because it allows mistruths to accumulate, is consent given to malicious falsehoods.

I think it’s an ask worth asking.